
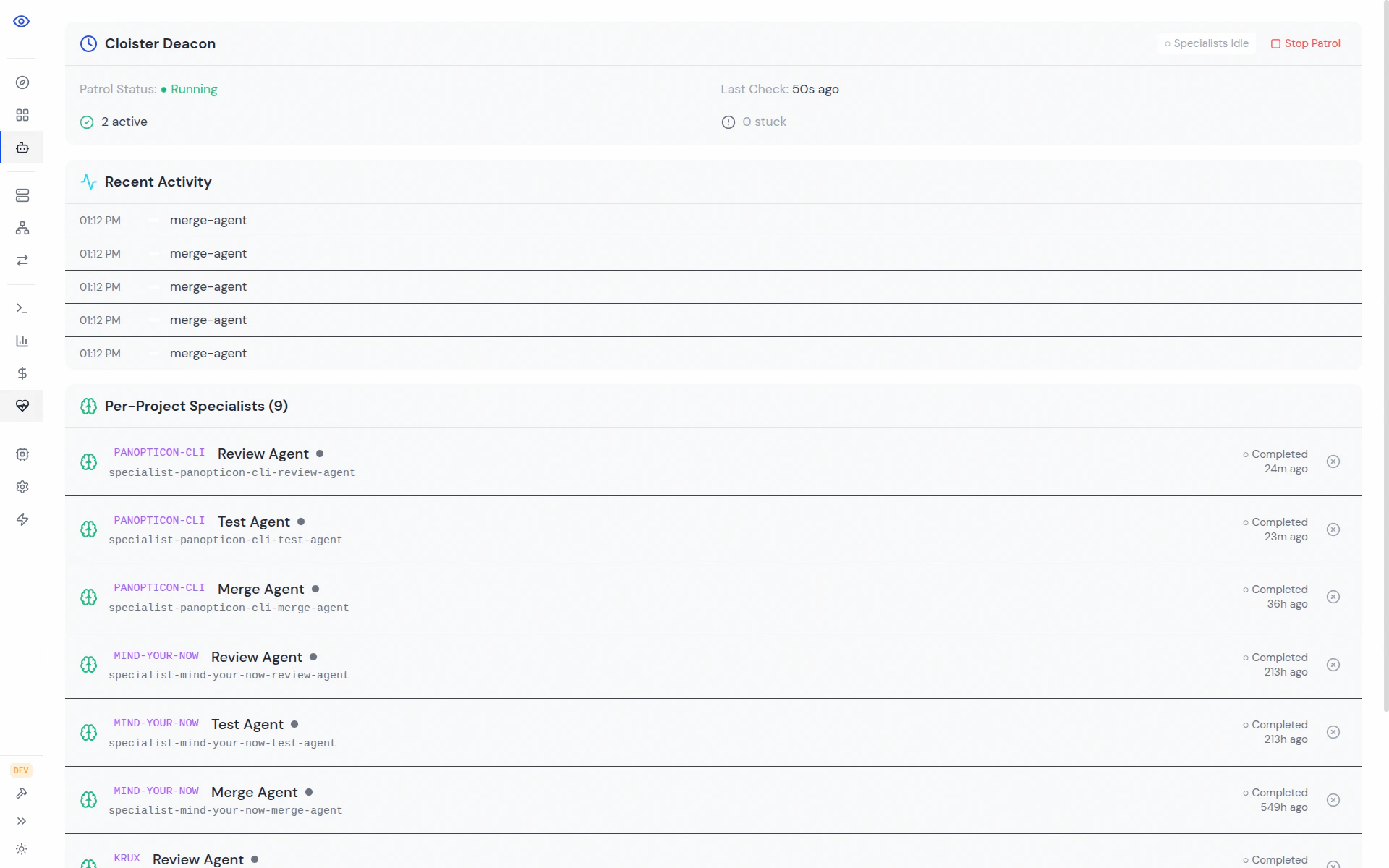

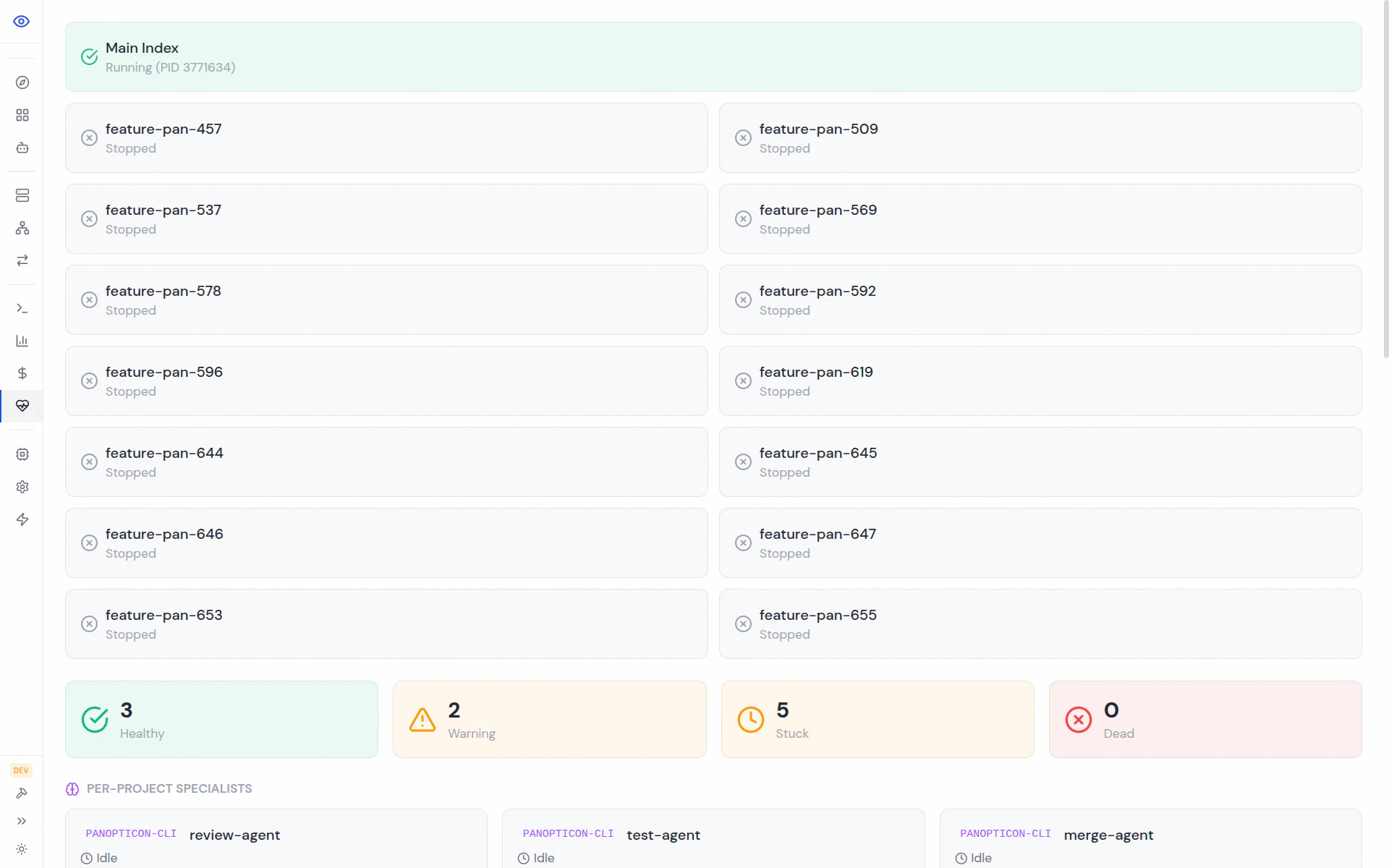

Specialist Agents

Work agents write code. Specialists turn that code into something you can actually merge. They review, test, click through the UI, resolve conflicts, and hand off to each other automatically — you just click Merge at the end.

Overview

A specialist is a focused agent with one job. It takes a completed piece of work, does that job, reports passed or failed, and either advances the work to the next stage or bounces it back to the work-agent with feedback.

Specialists are per-project and ephemeral — they spawn on-demand against a workspace, do their job, and terminate. There is no global specialist pool to warm up, no long-lived tmux sessions to babysit, and nothing to initialize before you start working.

What makes them different from work agents:

- Narrow scope. Each specialist has one responsibility and a purpose-built prompt.

- Per-project. Spawned against a specific project’s workspace with the right tools and context.

- Queued. If one specialist is busy, new tasks queue up and drain automatically.

- Coordinated. Cloister handles handoffs between stages.

The Five Specialists

| Specialist | Purpose | Trigger |
|---|---|---|
| review-agent | Code review before merge | Human clicks Review (dashboard) |
| test-agent | Runs the full test suite | Auto after review passes |
| inspect-agent | Per-status-change verification | Any specialist reports passed |
| uat-agent | Browser-based acceptance testing via Playwright | Auto after tests pass |
| merge-agent | All merges + conflict resolution | Human clicks Approve & Merge |

Review Pipeline Flow

The happy path is a sequential handoff: a human kicks it off, and the rest is automatic until the final merge click. Each time a specialist reports passed, inspect verifies the state transition against the spec before the next stage runs.
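The hand-off order can be pictured as a small state machine. This is a minimal sketch, not Panopticon's implementation: the stage names come from this page, while the `PipelineState` type, the `nextStage` function, and its `uatEnabled` and `inspectPassed` parameters are hypothetical. It also assumes that a transition rejected by inspect simply does not advance.

```typescript
// Hypothetical states on the happy path; "ready-to-merge" waits for a human click.
type PipelineState = "review" | "test" | "uat" | "ready-to-merge";

// Sketch: where work goes after the current specialist reports "passed".
// `uatEnabled` mirrors the per-project UAT toggle; `inspectPassed` stands in
// for inspect-agent's verdict on the transition (pass true if inspect is disabled).
function nextStage(current: PipelineState, uatEnabled: boolean, inspectPassed: boolean): PipelineState {
  if (!inspectPassed) return current; // assumption: a rejected transition does not advance
  if (current === "review") return "test";
  if (current === "test") return uatEnabled ? "uat" : "ready-to-merge";
  // After UAT the work waits for a human to click Approve & Merge.
  return "ready-to-merge";
}
```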

Review Agent

The review-agent is the gatekeeper. It reads the diff, the PRD, and the vBRIEF plan, and looks for:

- Logic errors and missed edge cases

- Security vulnerabilities (OWASP top 10, injection, auth bypass)

- Performance issues (N+1 queries, unnecessary work, leaked resources)

- Code quality and adherence to project conventions

Outcomes:

- passed: queues test-agent automatically

- failed: sends structured feedback to the work-agent and blocks the pipeline

- skipped: not applicable (e.g., a docs-only change)

Test Agent

The test-agent runs every configured test suite for the project and analyzes the failures rather than just reporting pass/fail. What it does:

- Runs all configured suites (backend, frontend unit, integration, e2e)

- Diagnoses failures — flake vs. real regression vs. environmental

- Reports results with actionable, file:line-referenced feedback

- On pass, queues uat-agent (if enabled) or marks the work ready-to-merge

Outcomes:

- passed: advances to UAT or ready-to-merge

- failed: feedback with failing test names and excerpts goes back to the work-agent

- skipped: no test suite applies to this change

- dispatch_failed: Cloister couldn't launch the test run (an infrastructure issue, not a code failure)

Test suites are configured per project in ~/.panopticon/projects.yaml.
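The four test-agent outcomes map naturally onto a discriminated union. The sketch below uses hypothetical type and field names to show why dispatch_failed is kept apart from failed: it signals an infrastructure problem, so it should not bounce work back to the work-agent. How Cloister actually recovers is not described here, so the "retry-dispatch" action is an assumption, as is treating skipped as "advance".

```typescript
// Hypothetical shape of a test-agent report; names are illustrative only.
type TestReport =
  | { status: "passed" }
  | { status: "failed"; failingTests: string[] }
  | { status: "skipped"; reason: string }
  | { status: "dispatch_failed"; error: string };

type Action = "advance" | "send-feedback-to-work-agent" | "retry-dispatch";

function route(report: TestReport): Action {
  // A real regression: the work-agent has to fix code.
  if (report.status === "failed") return "send-feedback-to-work-agent";
  // The run never started; the code is not at fault (retry is an assumption).
  if (report.status === "dispatch_failed") return "retry-dispatch";
  // passed, and (assumed) skipped, let the work move on.
  return "advance";
}
```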

Inspect Agent

The inspect-agent performs per-status-change verification. Whenever any specialist reports a status transition (review passed, test passed, etc.), inspect re-reads the spec and checks that the new state actually matches what the PRD asked for. Why it matters:

- Catches spec drift before it compounds across multiple stages

- Prevents a review-agent that’s too generous from sliding a broken change through

- Keeps the pipeline honest about the difference between “it compiled” and “it works”

Inspect is driven by /api/specialists/done: it fires on status change, not on a fixed schedule. Enable it per-project:

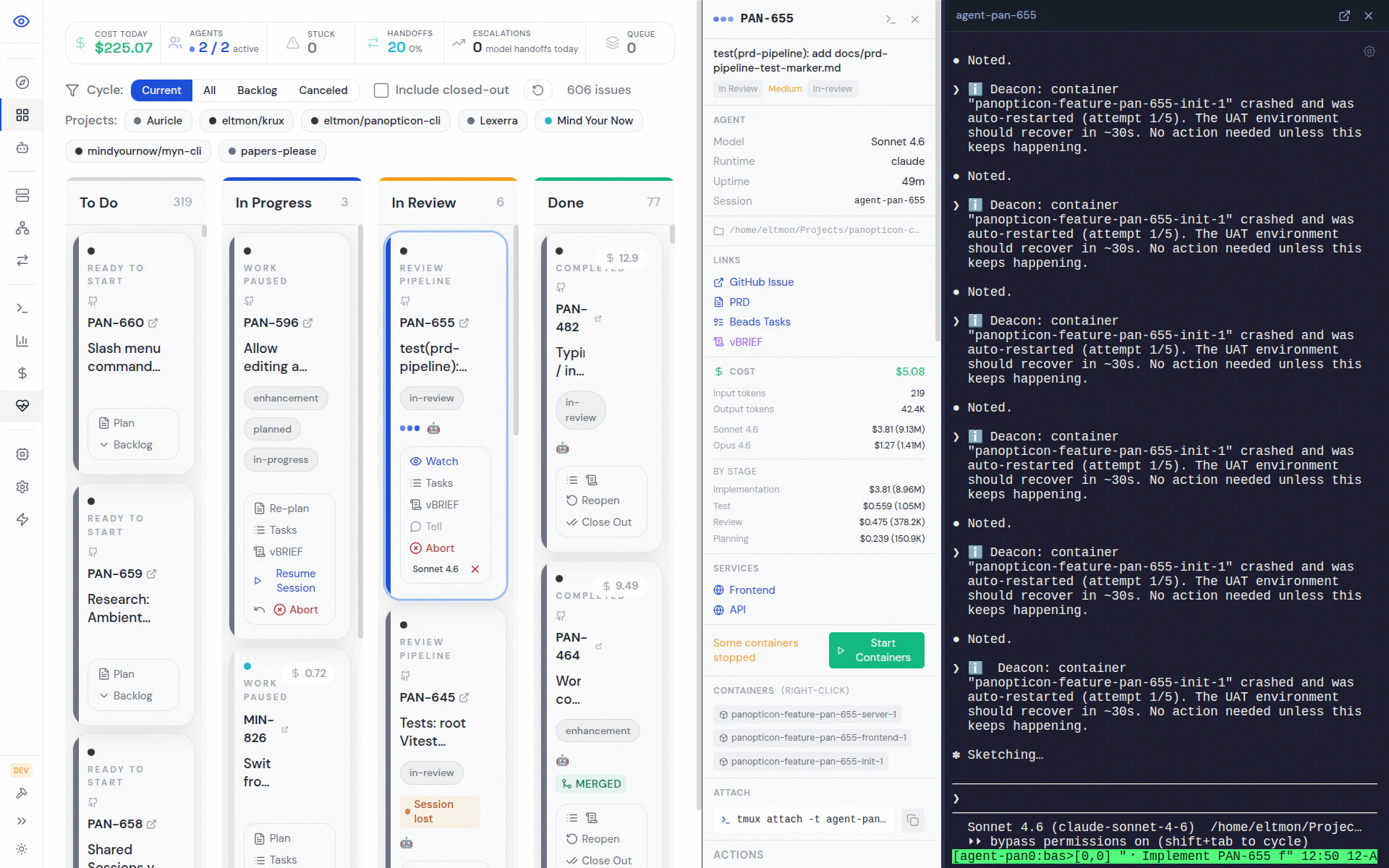

UAT Agent

The UAT (User Acceptance Testing) agent opens a real browser via Playwright MCP and walks through the acceptance criteria like a user would. What it does:

- Reads the PRD and the vBRIEF acceptance criteria

- Launches a browser against the project’s dev URL

- Executes each AC as a user flow

- Takes screenshots as evidence (pass and fail)

- On pass, marks ready-to-merge; on fail, sends screenshots + DOM snapshots back

Outcomes:

- passed: all ACs verified, ready for human merge approval

- failed: feedback includes the failing step, a screenshot, and the observed DOM

- skipped: backend-only or otherwise UI-irrelevant change

Merge Agent

The merge-agent handles every merge, not just conflicted ones. This is deliberate:

- It sees every diff that flows through the pipeline, building context

- When conflicts do occur, it already understands the codebase

- Tests are always re-run post-merge, catching integration regressions

Process:

- Pull latest main

- Analyze the incoming diff

- Merge the feature branch

- Resolve conflicts with AI, documenting each decision

- Re-run tests post-merge

- Commit the merge with a descriptive message

- Report results back to the dashboard
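The merge sequence above can be sketched as an ordered series of commands. This is a hedged illustration, not merge-agent's code: the git invocations are standard git, but the injected `run` executor, the `resolveConflicts` callback (standing in for AI conflict resolution), the `mergeFeature` name, and the `npm test` command are hypothetical placeholders. Injecting `run` lets the ordering be checked without a real repository.

```typescript
// Executes one command and returns its exit code (0 = success).
type Runner = (cmd: string[]) => number;

interface MergeReport {
  ok: boolean;         // result reported back to the dashboard
  decisions: string[]; // documented conflict-resolution decisions
}

// Hypothetical outline of the merge-agent steps. `resolveConflicts` is expected
// to stage its fixes and return a human-readable note for each decision.
function mergeFeature(branch: string, run: Runner, resolveConflicts: () => string[]): MergeReport {
  run(["git", "pull", "--ff-only", "origin", "main"]); // pull latest main
  run(["git", "diff", "--stat", `main...${branch}`]);   // analyze the incoming diff
  const mergeCode = run(["git", "merge", "--no-ff", "--no-commit", branch]);
  // Simplification: any non-zero merge exit is treated as "conflicts present".
  const decisions = mergeCode !== 0 ? resolveConflicts() : [];
  if (run(["npm", "test"]) !== 0) {                     // placeholder test command
    run(["git", "merge", "--abort"]);                   // leave main untouched
    return { ok: false, decisions };
  }
  // Descriptive commit message that records each resolution decision.
  const message = [`Merge ${branch}`, ...decisions].join("\n\n");
  run(["git", "commit", "-m", message]);
  return { ok: true, decisions };
}
```

Committing only after tests pass (via `--no-commit`) keeps a failed integration from ever landing on main.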

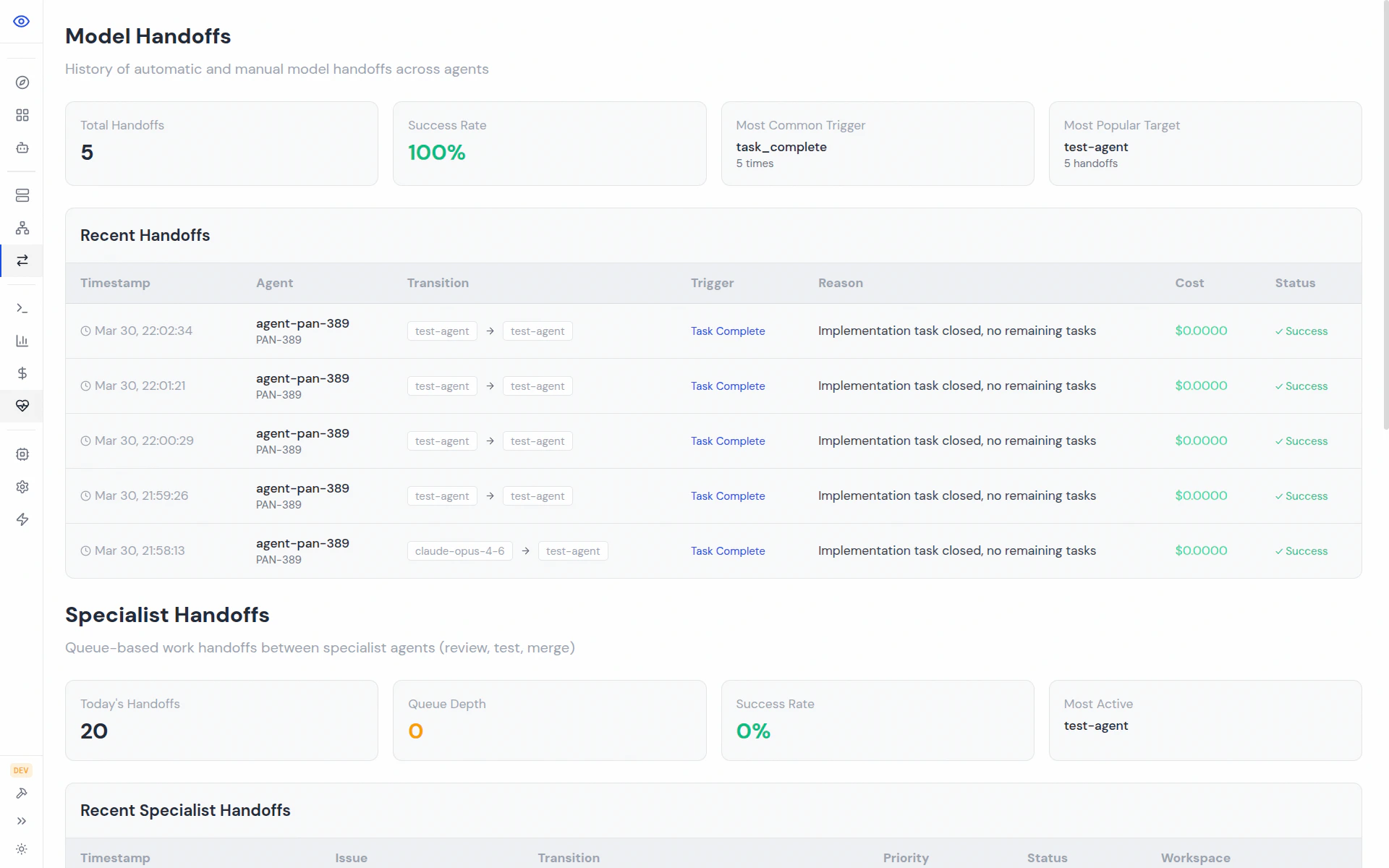

Queue Processing

Each specialist has a per-project task queue at ~/.panopticon/agents/{name}/hook.json, managed via the FPP (Fixed Point Principle), borrowed from Gastown and inspired by Doctor Who: any runnable action is a fixed point and must resolve before the system can rest.

Queued tasks drain in priority order: urgent > high > normal > low.
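Picking the next task by priority can be sketched as a single pass over the pending tasks. The `HookTask` shape is hypothetical and is not the actual hook.json schema; breaking ties first-in, first-out within a priority level is also an assumption.

```typescript
type Priority = "urgent" | "high" | "normal" | "low";

// Hypothetical task record; the real hook.json layout may differ.
interface HookTask { id: string; priority: Priority; queuedAt: number }

// Lower rank drains first.
const RANK: Record<Priority, number> = { urgent: 0, high: 1, normal: 2, low: 3 };

// Highest priority first; oldest first within the same level (assumed).
function nextTask(queue: HookTask[]): HookTask | undefined {
  let best: HookTask | undefined;
  for (const t of queue) {
    if (
      best === undefined ||
      RANK[t.priority] < RANK[best.priority] ||
      (RANK[t.priority] === RANK[best.priority] && t.queuedAt < best.queuedAt)
    ) {
      best = t;
    }
  }
  return best;
}
```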

The FPP watchdog notices when a specialist has pending hook work but is idle, and sends escalating nudges until the work resolves.
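The watchdog's escalation can be pictured as a nudge level that rises the longer a specialist sits idle with pending hook work. This is a sketch under stated assumptions: the three nudge labels, the five-minute step, and the `watchdogNudge` name are invented for illustration; the source only says that nudges escalate until the work resolves.

```typescript
// Hypothetical nudge levels; real messages and timings are not documented here.
const NUDGES: readonly string[] = ["reminder", "stronger reminder", "final reminder"];
const STEP_MS = 5 * 60 * 1000; // assumed: one level per five idle minutes

// Returns the nudge to send, or null when none is needed.
function watchdogNudge(pendingTasks: number, idle: boolean, idleForMs: number): string | null {
  // No pending work, or the specialist is already busy: nothing to do.
  if (pendingTasks === 0 || !idle) return null;
  // Escalate with idle time, capped at the strongest level.
  const level = Math.min(Math.floor(idleForMs / STEP_MS), NUDGES.length - 1);
  return NUDGES[level] ?? null;
}
```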

Agent Self-Requeue (Circuit Breaker)

After a human kicks off the first review, a work-agent can request re-review automatically when it thinks it has fixed the feedback:

- The first human Review click resets the counter to 0

- Each pan work request-review increments it

- After 7 automatic re-requests, the endpoint returns HTTP 429

- A human must click Review in the dashboard to unstick it

The endpoint behind pan work request-review is POST /api/workspaces/:issueId/request-review.

The constant lives at src/dashboard/server/routes/workspaces.ts:81 (MAX_AUTO_REQUEUE = 7).
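The counter behavior can be modeled in a few lines. This is an illustrative sketch, not the code in workspaces.ts: only MAX_AUTO_REQUEUE = 7 and the 429 status come from this page. The `RequeueBreaker` class, its method names, and the 200 success status are hypothetical, and the precondition that a human has already started the first review is not modeled.

```typescript
const MAX_AUTO_REQUEUE = 7;

// Hypothetical per-workspace re-review counter keyed by issue ID.
class RequeueBreaker {
  private counts = new Map<string, number>();

  // A human clicking Review resets the counter.
  humanReview(issueId: string): void {
    this.counts.set(issueId, 0);
  }

  // Agent-initiated re-review; returns the HTTP status the endpoint would send.
  requestReview(issueId: string): 200 | 429 {
    const used = this.counts.get(issueId) ?? 0;
    if (used >= MAX_AUTO_REQUEUE) return 429; // breaker open until a human clicks Review
    this.counts.set(issueId, used + 1);
    return 200; // assumed success status
  }
}
```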

Specialist Safeguards

Specialists are constrained to prevent them from corrupting the main project repo:

- Spawned at project root: the workspace directory is passed as task context, never by cd-ing into it blindly

- Never check out branches: they work with whatever branch the workspace already has

- Workspace-first operations: pan workspace create <ISSUE-ID> is the only way to create new work

These rules are enforced at three layers:

- Prompt: clear, repeated warnings in every specialist prompt template

- Code: wake paths validate the target before spawning

- Git hooks: scripts/git-hooks/post-checkout auto-reverts any checkout detected inside a specialist tmux session
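A wake-path check of the kind described above might look like the guard below. This is a hypothetical sketch: the `validateWakeTarget` name, its parameters, and the idea of checking against a list of known workspace paths are assumptions for illustration. It compares path strings only and does not touch the filesystem.

```typescript
// Hypothetical guard run before a specialist is spawned.
// Returns an error message, or null when the target is acceptable.
function validateWakeTarget(projectRoot: string, target: string, knownWorkspaces: string[]): string | null {
  const norm = (p: string) => p.replace(/\/+$/, ""); // ignore trailing slashes
  const t = norm(target);
  // Never let a specialist operate directly on the main project repo.
  if (t === norm(projectRoot)) return "refusing to target the main project repo";
  // Only workspaces created through pan workspace create are valid targets.
  if (!knownWorkspaces.some((w) => norm(w) === t)) return "unknown workspace";
  return null;
}
```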

Configuration

Specialist configuration lives in ~/.panopticon/cloister.toml:

- enabled: whether the specialist runs at all

- auto_wake: auto-wake on trigger vs. wait for an explicit wake signal

- [model_selection.specialist_models]: per-specialist model override (haiku / sonnet / opus)
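How these three settings might combine when a trigger fires can be sketched as follows. The setting names enabled, auto_wake, and the per-specialist model override come from cloister.toml as described above; the TypeScript types, the `decideOnTrigger` function, and the "sonnet" fallback are hypothetical.

```typescript
type Model = "haiku" | "sonnet" | "opus";

// Hypothetical in-memory view of one specialist's settings.
interface SpecialistSettings { enabled: boolean; auto_wake: boolean }

type Decision =
  | { kind: "skip" }                 // specialist disabled
  | { kind: "wait-for-wake" }        // enabled, but needs an explicit wake signal
  | { kind: "spawn"; model: Model }; // auto-wake on this trigger

function decideOnTrigger(
  name: string,
  settings: SpecialistSettings,
  modelOverrides: { [specialist: string]: Model | undefined },
  defaultModel: Model = "sonnet", // assumed fallback when no override is set
): Decision {
  if (!settings.enabled) return { kind: "skip" };
  if (!settings.auto_wake) return { kind: "wait-for-wake" };
  return { kind: "spawn", model: modelOverrides[name] ?? defaultModel };
}
```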

When to Disable Each Specialist

Specialists are powerful but not free: each one adds latency and API cost. Sensible tradeoffs:

- review-agent: almost never disable. The one gate between work-agents and prod.

- test-agent: disable only if your test suite is broken or prohibitively slow. Fix the suite instead.

- inspect-agent: disable for projects without a PRD/spec culture; it has nothing to verify against.

- uat-agent: disable for backend-only services or CLIs with no browser surface.

- merge-agent: keep enabled even for conflict-free projects; it's your integration test safety net.

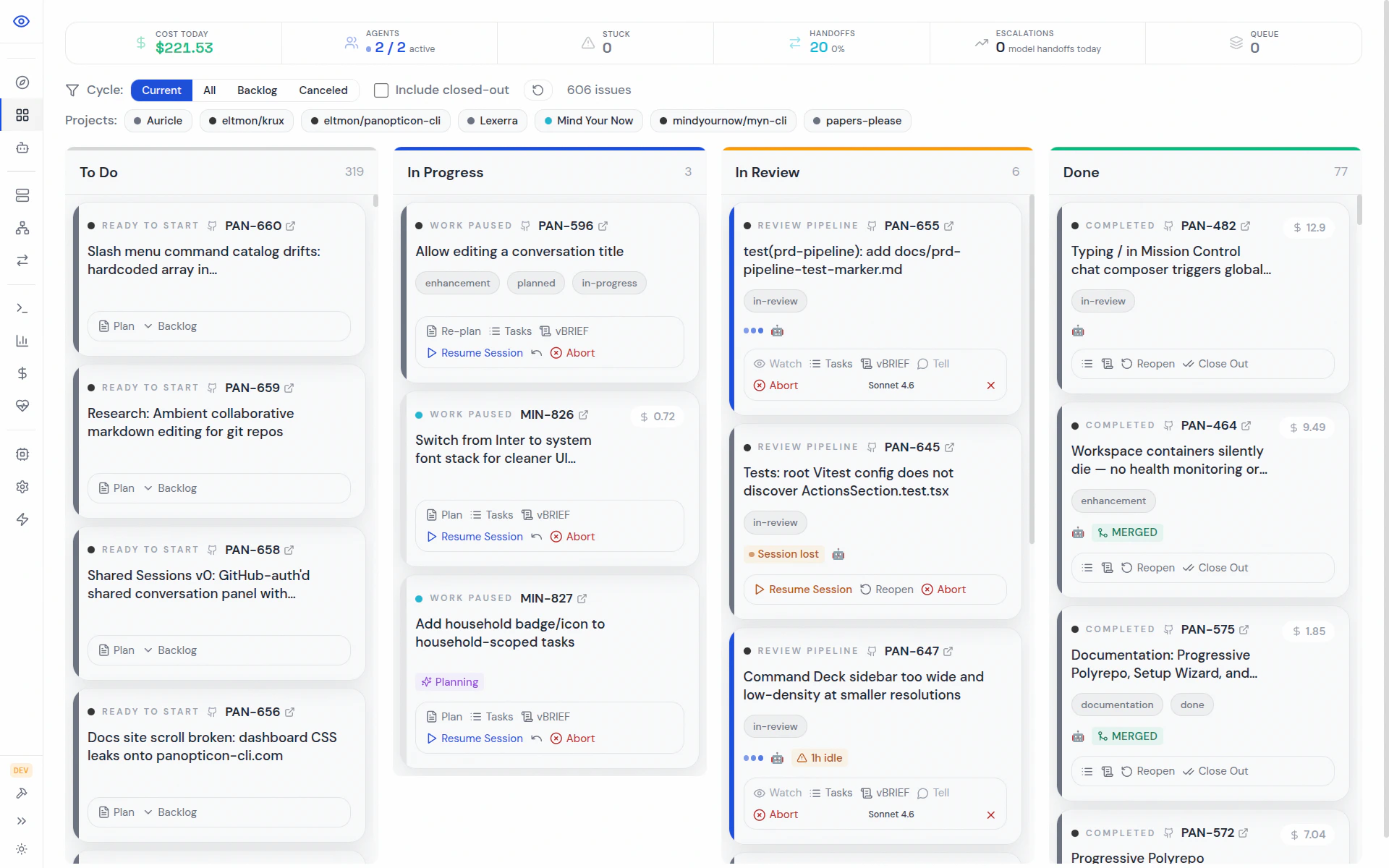

Viewing Specialist Status

Related Guides

- Cloister — the lifecycle manager that coordinates specialists

- Convoys — parallel specialist execution for code review

- Agent Commands — full CLI reference for working with agents